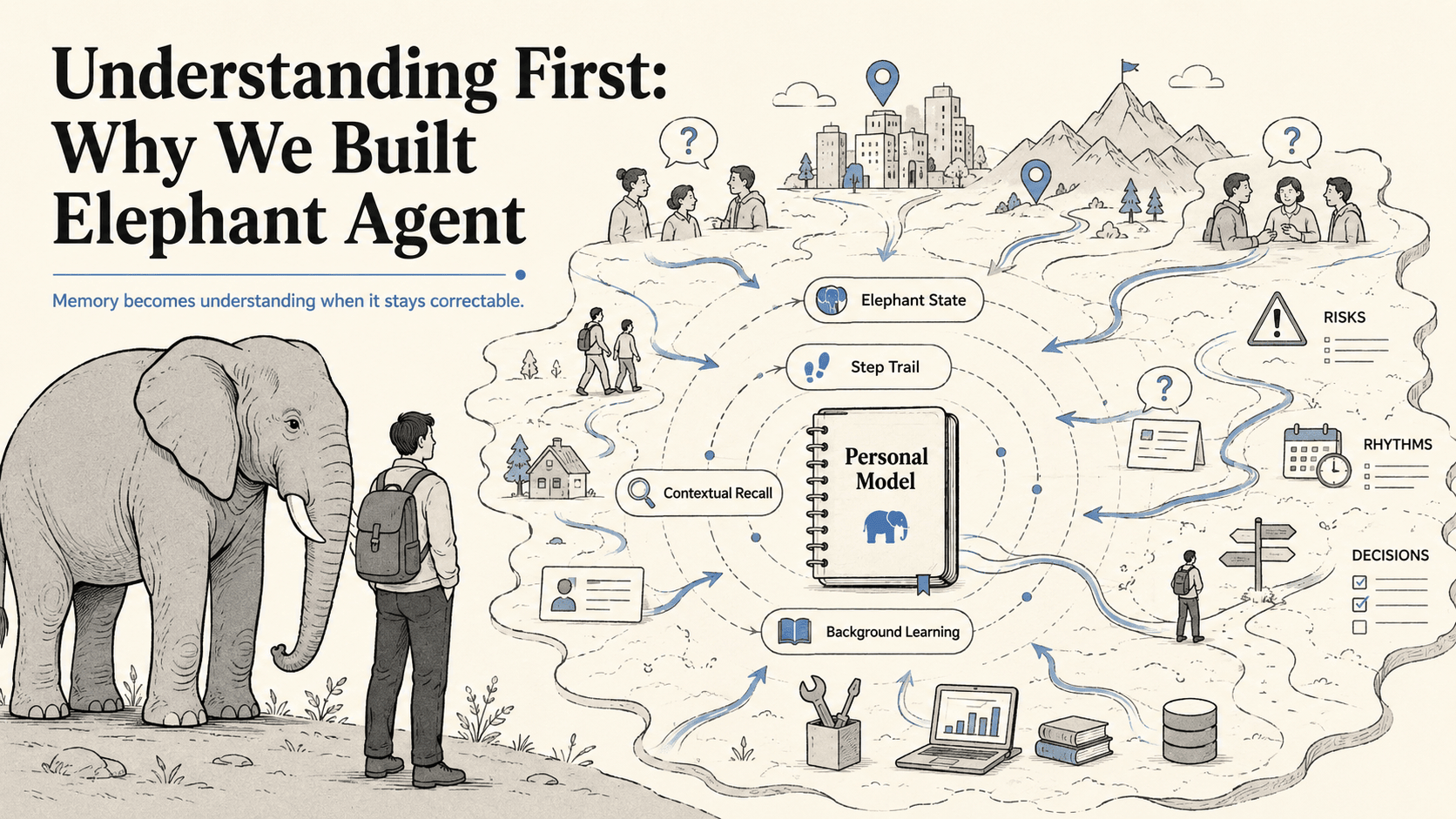

Understanding First: Why We Built Elephant Agent

Elephants never forget, the saying goes, but the interesting part is not storage.

An elephant does not remember like a hard drive. It remembers like a living being. Its memory is social, emotional, spatial, survival-aware, and practical. It recognizes members of the herd by sight and smell. It remembers danger cues. It returns to important places long after the last visit. It knows a safe path from a risky signal, recognizes a trusted companion, and remembers the dry season that should not be repeated.

Older matriarchs make this visible. A remembered drought is not a line in a database; it is judgment the herd can move with. Elephants can recognize other animals and humans after years apart. Their hippocampus links emotion to long-term memory. Their cerebral cortex supports problem solving, cooperation, tool use, and quantity tracking. They communicate across distance, comfort each other, protect the young, and respond to loss with a tenderness that makes memory feel close to care.

That is the inspiration for Elephant Agent: not an AI with more memory, but an AI whose memory can turn lived context into better judgment.

The first wave of personal agents is learning two useful things. Memory matters. Skills matter. A personal AI should be able to recall what happened, and it should become more capable over time.

But neither is the center.

Raw recall answers: what can be retrieved from the past?

Skills answer: what can the agent do?

Personal AI needs a third object, and this one belongs at the center: what does the agent currently understand about the person, and how can that understanding be corrected, deepened, or questioned?

Elephant Agent is built around that object. We call it the Personal Model.